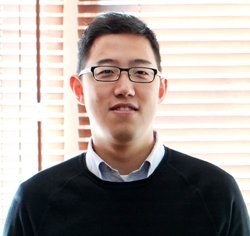

Database and Data Collection

Although machines are increasingly pervasive in our everyday lives, we are still forced to interact with them through limited communication channels. Our overarching goal is to support new and complex interactions by teaching the computer to interpret the expressions of the user. Towards this goal, we present Vinereactor, a new labeled database for face analysis and affect recognition. Our dataset is one of the first to explore human expression recognition in response to a stimulus video, enabling a new facet of affect analysis research. Furthermore, our dataset is the largest of its kind, nearly an order of magnitude larger than its closest related work.

See our research publication here

Edward Kim and Shruthika Vangala, "Vinereactor: Crowdsourced Spontaneous Facial Expression Data",

International Conference on Multimedia Retrieval, 2016.

@inproceedings{kim2016vinereactor,

title={Vinereactor: Crowdsourced Spontaneous Facial Expression Data},

author={Kim, Edward and Vangala, Shruthika},

booktitle={International Conference on Multimedia Retrieval (ICMR)},

year={2016},

organization={ACM}

}

Deep Learning

One of the most important cues for human communication is the interpretation of facial expressions. We present a novel computer vision approach for Action Unit (AU) recognition based upon deep learning. We introduce a new convolutional neural network training loss specific to AU intensity that utilizes a binned cross entropy method to fine-tune an existing network. We demonstrate that a network can be trained more effectively and accurately with this loss than with an L2 regression or naive cross entropy approach. Additionally, our model naturally represents the co-occurrence of action units and can handle missing data through regression data imputation. Finally, our experimental results demonstrate the improvement of our framework over the current state of the art.
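To make the idea of a binned cross entropy loss concrete, here is a minimal NumPy sketch. It is an illustration only, not the loss from the publication: the bin edges, the 0 to 5 intensity scale, the one-softmax-per-AU layout, and the function names `bin_intensities` and `binned_cross_entropy` are all assumptions made for this example.

```python
import numpy as np

def bin_intensities(intensity, edges):
    """Map continuous AU intensities to integer bin indices.

    np.digitize returns i such that edges[i-1] <= x < edges[i],
    so len(edges) interior edges produce len(edges) + 1 bins.
    """
    return np.digitize(intensity, edges)

def binned_cross_entropy(logits, intensity, edges):
    """Mean cross entropy over action units (hypothetical formulation).

    logits:    (num_aus, num_bins) raw scores, one softmax per AU
    intensity: (num_aus,) ground-truth intensities
    edges:     interior bin edges, with num_bins == len(edges) + 1
    """
    targets = bin_intensities(intensity, edges)
    # Numerically stable log-softmax along the bin axis.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # Log-probability assigned to the correct bin of each AU.
    picked = log_probs[np.arange(len(targets)), targets]
    return float(-picked.mean())

# Assumed setup: three AUs, intensities on a 0-5 scale, six bins.
edges = np.array([0.5, 1.5, 2.5, 3.5, 4.5])
intensity = np.array([0.0, 2.0, 5.0])
uniform_logits = np.zeros((3, 6))
loss = binned_cross_entropy(uniform_logits, intensity, edges)
```

With uniform logits every bin receives probability 1/6, so the loss equals log(6). Unlike an L2 regression target, each bin is treated as a class, which is the general property this sketch is meant to show.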

See our research publication here

Edward Kim and Shruthika Vangala, "Deep Action Unit Classification using a Binned Intensity Loss and Semantic Context Model",

International Conference on Pattern Recognition, 2016.

@inproceedings{kim2016deep,

title={Deep action unit classification using a binned intensity loss and semantic context model},

author={Kim, Edward and Vangala, Shruthika},

booktitle={23rd International Conference on Pattern Recognition (ICPR)},

pages={4136--4141},

year={2016},

organization={IEEE}

}

Affect Transfer Learning

The key component of our research is the recognition that the emotion classification of an image sequence is not an absolute truth, nor is it determined solely by the input video. In other words, machines cannot simply look at images and infer emotion; humans need to be in the loop. Some people will find a video amusing, whereas others will not. Thus, the reaction of human observers (as opposed to the actual video content) is a much more informative signal for emotion classification. If we can quantify the emotion displayed by a person watching these videos, we can attempt to transfer the emotion classified from the observer to the video.
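One simple way to picture this transfer is sketched below. The text does not specify how observer reactions are combined, so the averaging rule, the label set in `EMOTIONS`, the example numbers, and the function name `transfer_affect` are all hypothetical choices made for illustration.

```python
import numpy as np

def transfer_affect(observer_probs):
    """Average per-observer emotion distributions into a video-level one.

    observer_probs: (num_observers, num_emotions) array-like, where each
    row is the emotion distribution estimated from one observer's face.
    """
    probs = np.asarray(observer_probs, dtype=float)
    video_dist = probs.mean(axis=0)
    # Renormalize in case the input rows do not sum exactly to one.
    return video_dist / video_dist.sum()

EMOTIONS = ["amused", "neutral", "annoyed"]  # hypothetical label set

# Made-up reactions from three observers watching the same video.
reactions = [
    [0.8, 0.1, 0.1],
    [0.6, 0.3, 0.1],
    [0.1, 0.7, 0.2],
]
video_dist = transfer_affect(reactions)
video_label = EMOTIONS[int(video_dist.argmax())]
```

Keeping the full distribution `video_dist`, rather than only `video_label`, preserves the point made above that different observers may react differently to the same video.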

Support

This research is supported by an AWS in Education Research Grant award.